DeepSeek releases upgrade to its latest non-reasoning AI model

DeepSeek on Tuesday announced the release of an upgraded version of its V3 model. The upgrade brings improvements in front-end development, Chinese writing and tool use, areas that do not rely on complex multi-step reasoning.

[Photo/DeepSeek]

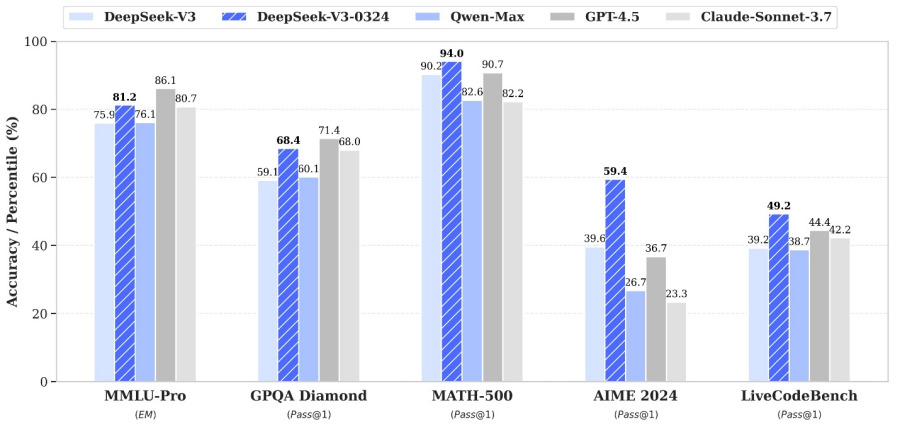

According to the latest benchmark data released by DeepSeek, V3-0324 outperforms the original V3 in multiple categories. On the MMLU-Pro benchmark, which tests general knowledge and reasoning, accuracy rose from 75.9 percent to 81.2 percent. On GPQA Diamond, which evaluates models on graduate-level questions across a range of scientific disciplines, the score jumped from 59.1 percent to 68.4 percent. Coding proficiency has also increased, with LiveCodeBench results moving from 39.2 percent to 49.2 percent. Taken together, the results point to gains in general knowledge, scientific question answering and coding.
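To put the quoted figures side by side, the short Python sketch below computes the percentage-point gain and the relative gain for each benchmark. It uses only the scores cited above; the helper name `gains` and the variable names are illustrative and do not come from DeepSeek.

```python
# Scores in percent as quoted in the article: (original V3, V3-0324).
scores = {
    "MMLU-Pro": (75.9, 81.2),
    "GPQA Diamond": (59.1, 68.4),
    "LiveCodeBench": (39.2, 49.2),
}


def gains(before, after):
    """Return (percentage-point gain, relative gain in percent), each rounded to one decimal."""
    points = after - before
    relative = points / before * 100
    return round(points, 1), round(relative, 1)


# Hypothetical summary structure, keyed by benchmark name.
summary = {name: gains(*pair) for name, pair in scores.items()}

for name, (points, relative) in summary.items():
    print(f"{name}: +{points} points ({relative}% relative)")
```

By this calculation the gains are 5.3 points on MMLU-Pro, 9.3 points on GPQA Diamond and 10.0 points on LiveCodeBench, with LiveCodeBench showing the largest relative improvement at roughly 25.5 percent.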

The update also strengthens the model’s front-end development abilities, producing more aesthetically refined and executable output for both websites and game interfaces. In Chinese writing, the model now generates content that aligns more closely with the DeepSeek R1 writing style, with noticeable quality improvements in medium- and long-form content.

Other improvements include better performance in interactive rewriting, enhanced translation and letter-writing features, and more detailed responses to Chinese-language search queries.

For tasks that do not require complex reasoning, DeepSeek recommends using V3 with the “DeepThink” mode turned off, which keeps responses efficient without sacrificing output quality.

The World Internet Conference (WIC) was established as an international organization on July 12, 2022, and is headquartered in Beijing, China. It was jointly initiated by the Global System for Mobile Communications Association (GSMA), the National Computer Network Emergency Response Technical Team/Coordination Center of China (CNCERT), the China Internet Network Information Center (CNNIC), Alibaba Group, Tencent, and Zhijiang Lab.